Just Ralph it, baby!

This year started with a lot of beef. I gave a talk last year titled ‘The Claude Code Wars’, and it seems that we have now entered the second episode.

This is where it all began, roughly six months ago. Geoffrey Huntley wrote a blog post about how Ralphing works. It’s based on a simple idea: pick a prompt, then let a coding agent run on that prompt for as long as necessary, or until you are happy with the result. The approach became a meme: it solves the “human-in-the-loop” problem that agents have today by brute force, rerunning the agent and burning tokens.

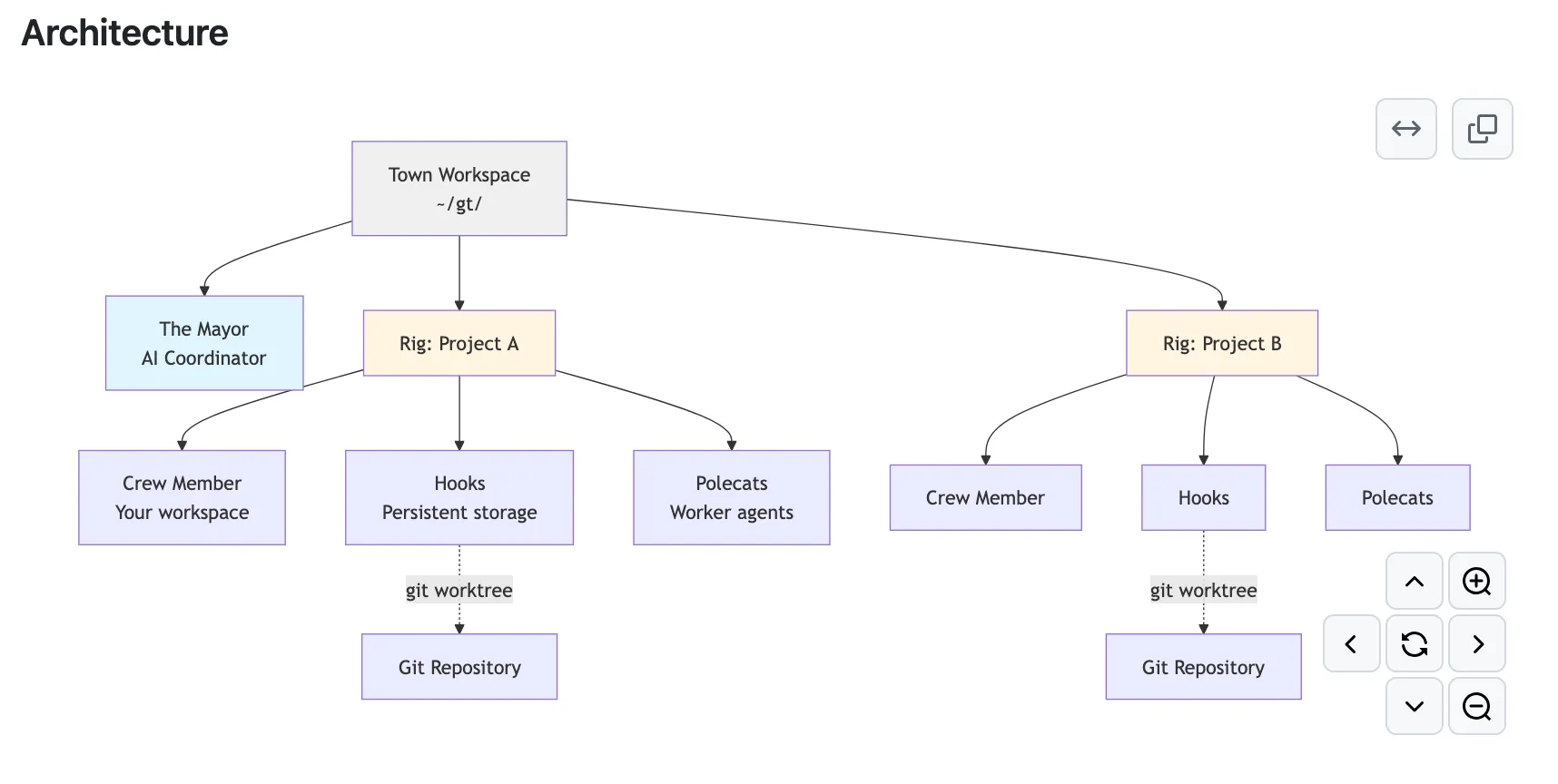
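
To make the idea concrete, here is a minimal sketch of such a loop in Python. It is not Geoffrey's actual setup: `run_agent` and `is_done` are hypothetical callables standing in for "invoke your coding agent" and "am I happy with the result?", and `max_iterations` is a safety cap I added so the sketch always terminates.

```python
from typing import Callable, Tuple


def ralph_loop(
    prompt: str,
    run_agent: Callable[[str], str],   # hypothetical: runs one agent session, returns its output
    is_done: Callable[[str], bool],    # hypothetical: your acceptance check
    max_iterations: int = 100,         # assumption: a cap so the loop cannot run forever
) -> Tuple[str, int]:
    """Feed the same prompt to the agent until the result is accepted."""
    result = ""
    for iteration in range(1, max_iterations + 1):
        # The prompt never changes; only the state the agent works on does.
        result = run_agent(prompt)
        if is_done(result):
            return result, iteration
    # Cap reached without an accepted result: return the last attempt.
    return result, max_iterations


if __name__ == "__main__":
    attempts = {"count": 0}

    def fake_agent(prompt: str) -> str:
        # Stand-in for a real agent: "succeeds" on the third run.
        attempts["count"] += 1
        return "tests pass" if attempts["count"] >= 3 else "tests fail"

    print(ralph_loop("Build the thing.", fake_agent, lambda out: out == "tests pass"))
```

The whole trick is that nothing in the loop is clever: every improvement has to come from the agent picking up the state left behind by the previous run.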

Fast forward to January and Gas Town by Steve Yegge is released. It’s a multi-agent orchestration system with the following architecture diagram:

If that sounds complicated, that’s because it is. You have one main agent to communicate with: the mayor. The mayor then commands a team of different roles to build your project. Apparently, people are using multiple Claude Max $200 plans simultaneously with this approach. It instantly became very popular.

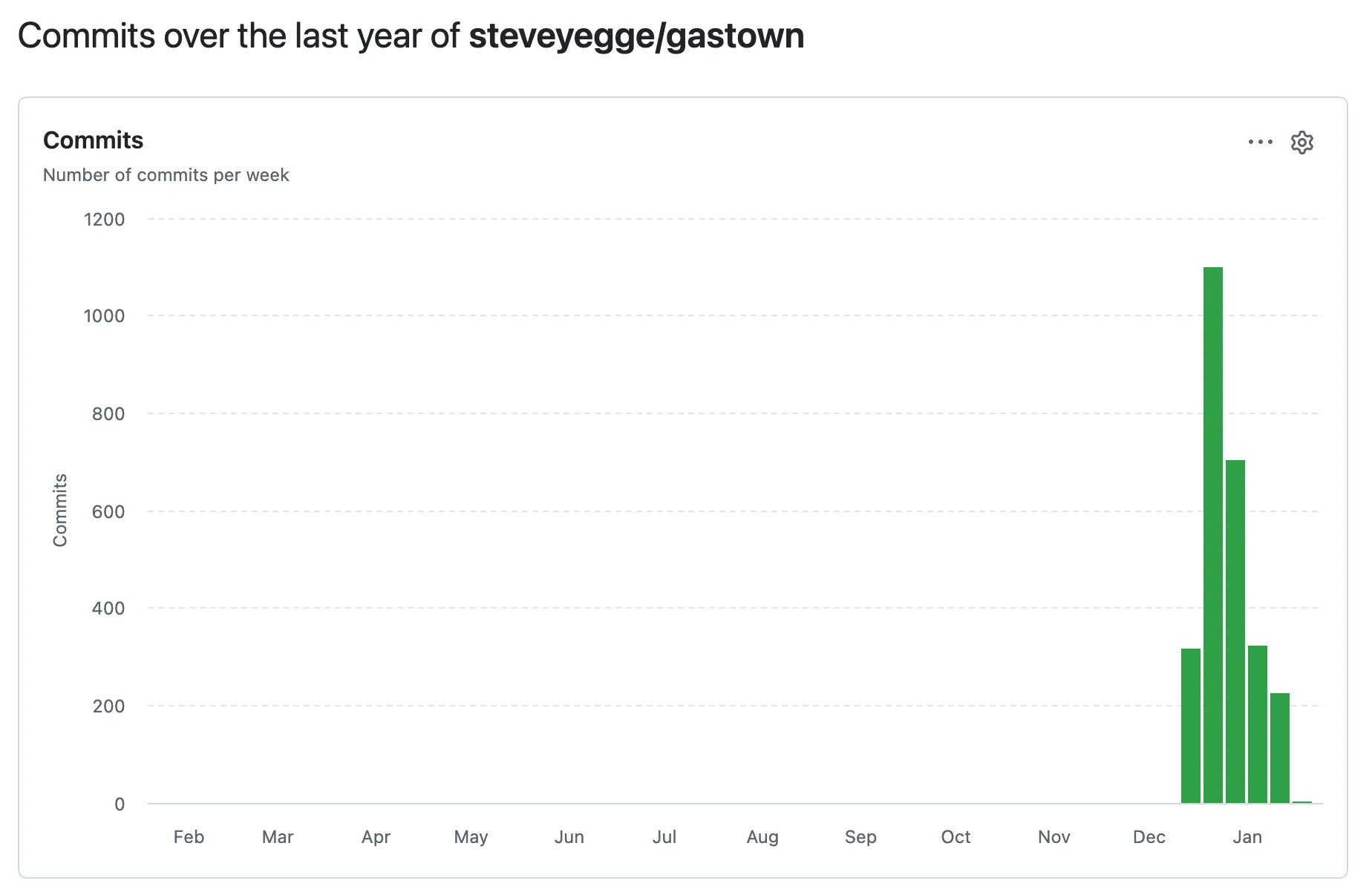

Gas Town was probably built using a similar approach. You can see in the Commits graph on GitHub that an absurd number of commits were created in a short amount of time.

Although Gas Town is more elaborate than Ralphing, the promise is the same: building software no longer requires any skill. Skill can be completely replaced by brute force with average agents. Give them enough time and your project will surpass any project written by humans.

On the other side of the arena are people with a deep commitment to software engineering who are trying to combine the power of their trained brains with that of agents. Peter’s post, Shipping at Inference-Speed, is an excellent summary and illustrates the extent to which standard software engineering practices have diverged in the last year.

Armin’s post delves deeply into the topic and is well researched. For example, it points out that Beads, an issue tracker created by the makers of Gas Town, uses 240k lines of code to track issues in a simple format.

So is this just highly entertaining popcorn time 🍿, something to watch from the sidelines? I don’t think so.

We’re seeing very different ways of using agents to assist with software project development. Unless you abstain from using agents at all, it’s impossible not to pick a side here.

I’m investing my time and money in enhancing my existing software development skills with agentic tools like Claude Code or Codex. I don’t want to be completely replaced by brute-force approaches. Fingers crossed! 🤞